So this is being written during Week 3 (I’m quite behind but it’s a good thing, I promise). I was swirling around this week trying to pinpoint exactly what I wanted to get at, and I realised the topic I had chosen was so vast that I could only go surface deep. I wanted something to recognise my internal frustration at constantly blocking ads only to get a similar (if not the same) type of product advertised in its place.

I’ve realised (after an amazing discussion with Jamie and Ben) that, much like Alice down the rabbit hole, I’ve spiralled and lost my own direction. I need to refocus: carry on looking at social media and ad marketing, but narrow my focus and rethink my strategy. Discussing the topic with these two was fab. They really helped me clarify that I want to focus on the algorithms and bias of ad marketing, and that this is the basis of my initial thoughts.

It made me reconsider how I use Insta and other social media sites, and consider the inherent bias that occurs on a daily basis for all of us. I’m being placed into a biased bracket every single day based on my age, gender, job, etc. This terrifies me, but I totally get that companies and businesses need to direct their marketing at relevant audiences so as not to waste time and money.

BUT, through all of this, our little social bubbles become smaller and less varied, meaning what we see is a fraction of what we’ve followed. Because of what we’ve typed into Google late at night, what we mindlessly scroll past is just another subconscious reminder. Is this limiting our creativity? How can we branch out and find new things if everything suggested is based on what we’ve already seen or expressed an interest in? Algos are now around us in everything we do, every place we visit, everything we even say.

I know now that my new direction will be researching algos and coding, and perhaps reflecting how censored and blinkered our personal “social bubble” really is. My other half is studying Computing and IT and we’ve already discussed how I can use his knowledge to really visualise this issue. It’s an exciting new direction and I’m so glad I had that chat with Ben and Jamie to really focus on what I want to achieve.

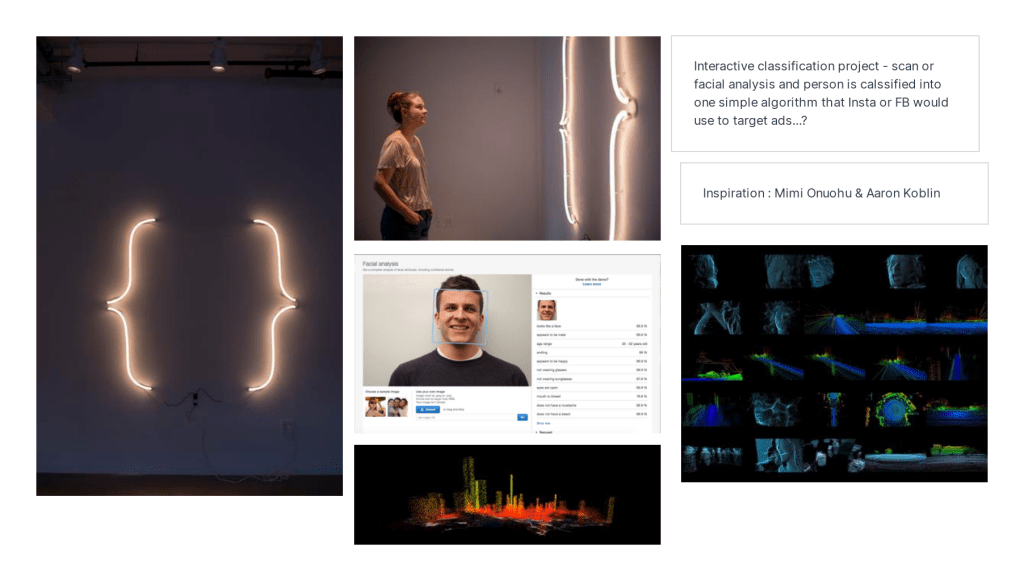

Moodboard 1: Interactive

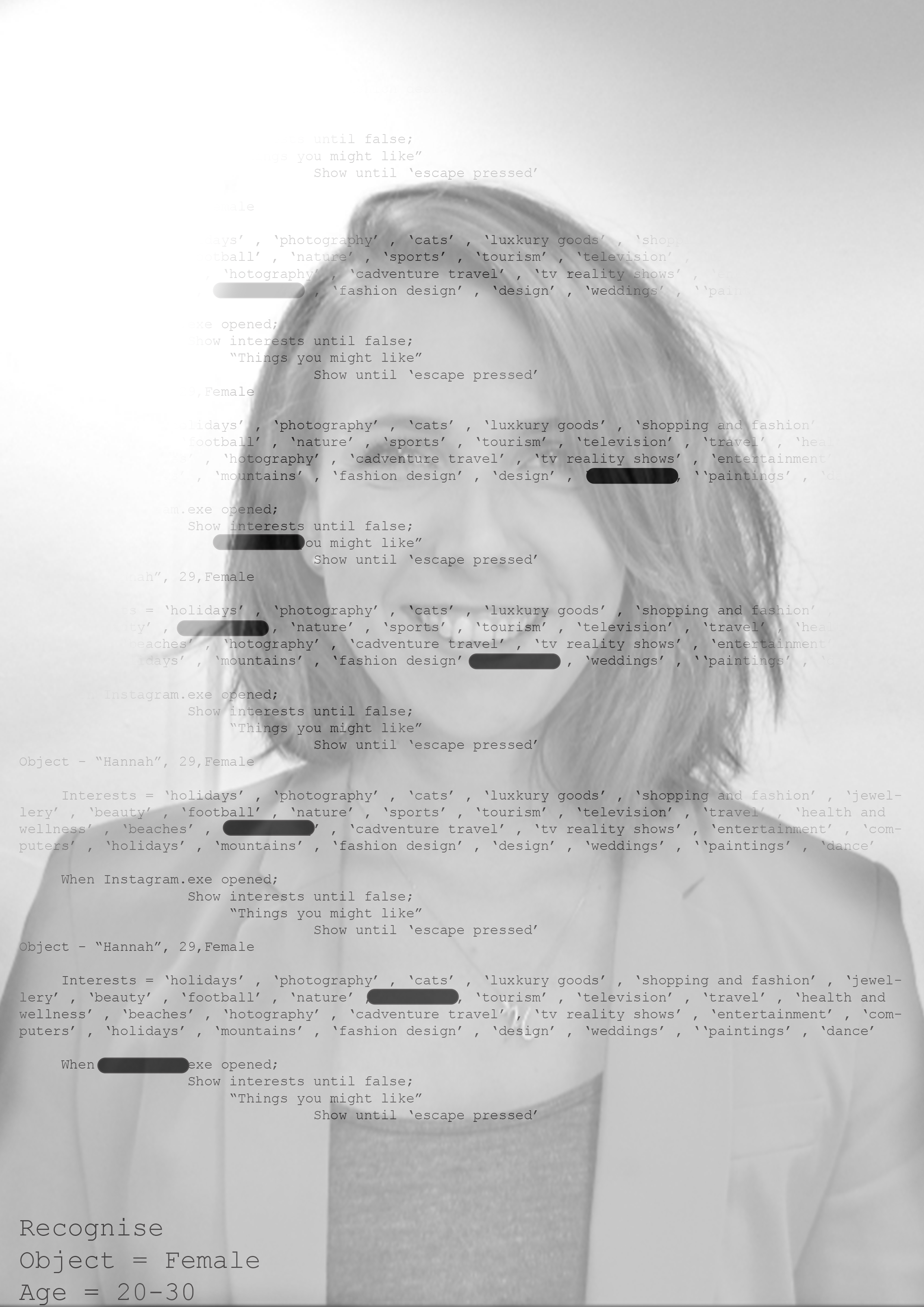

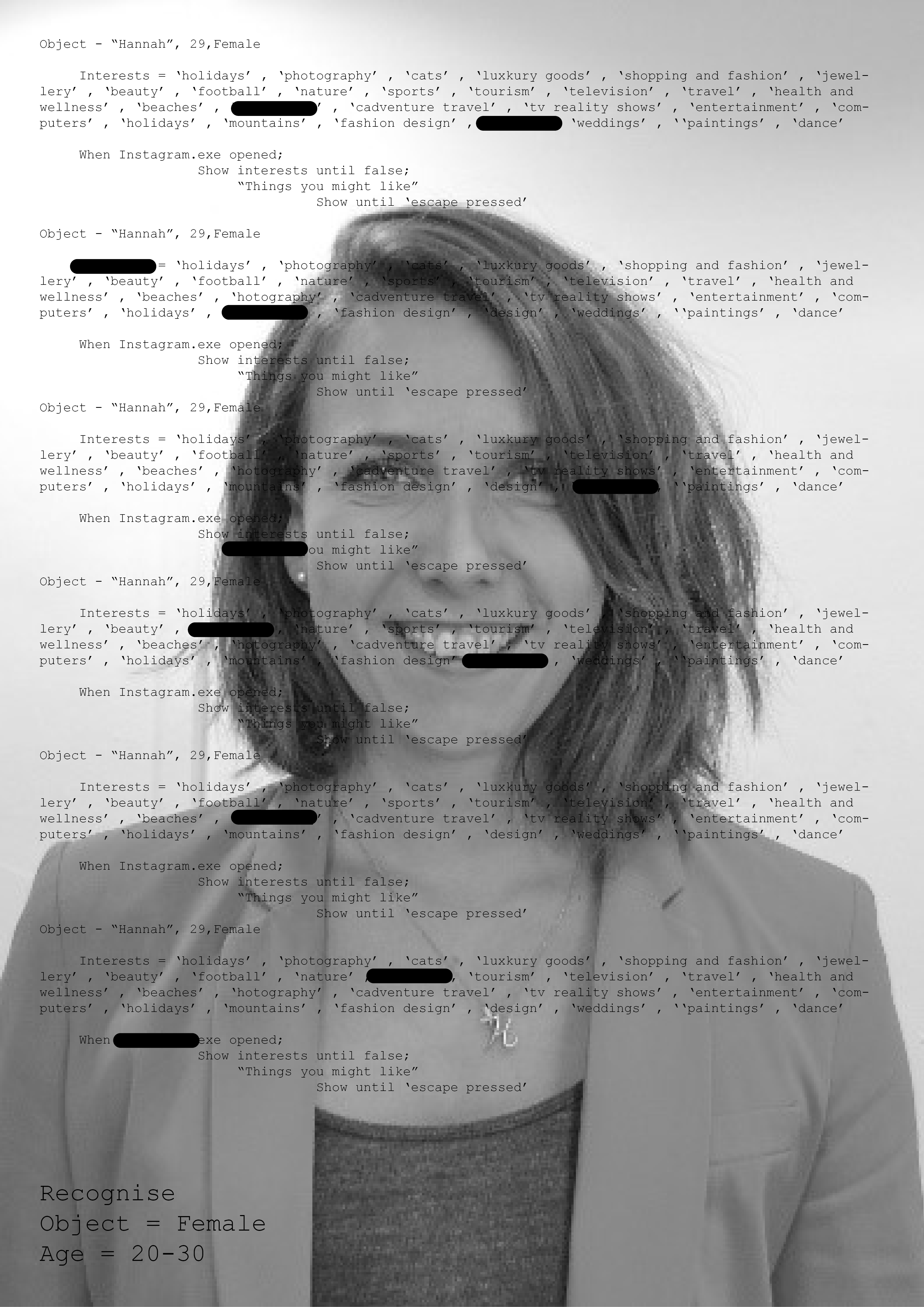

So this first moodboard is one possible way of representing what I’m trying to portray. I guess if I wasn’t writing this in Week 3 and had unlimited time for this project I’d 100% go for this. My intention would be to have some facial recognition software or a facial scanner that would assess the person standing in front of it and create an algorithm for that individual. The purpose would be to reflect how a computer sees us and translates our interests and personality into a long sheet of illegible code/algorithms. It would be such an impactful and meaningful exhibition. Mimi Ọnụọha did something very similar: her camera would scan two people stood side by side and the brackets would light up if it recognised them as similar, processing the categories the computer was given and allotting the two people to the same one if they matched (e.g. female, similar face shape, etc.). Aaron Koblin also covers a lot of interactive data visualisation, which I guess this is. His work really helped me focus on how the coding doesn’t need to be understood, just accurate and presented in a way that both works and is recognised.
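As a rough illustration of the "do these two people land in the same bracket?" idea, here is a minimal Python sketch. It is not how Classification.01 actually works: the function name, the category names and the labels are all made up for the example, and a real piece would get its labels from a camera and a trained model rather than hand-typed dictionaries.

```python
def shared_categories(person_a: dict[str, str], person_b: dict[str, str]) -> list[str]:
    """Return the categories in which both people were given the same label."""
    common = person_a.keys() & person_b.keys()
    return sorted(cat for cat in common if person_a[cat] == person_b[cat])


# Hypothetical labels a machine might assign to two visitors.
visitor_a = {"gender": "female", "age_bracket": "25-34", "face_shape": "oval"}
visitor_b = {"gender": "female", "age_bracket": "35-44", "face_shape": "oval"}

matches = shared_categories(visitor_a, visitor_b)
print(matches)  # ['face_shape', 'gender']

# The "brackets light up" only if at least one category matches.
print("light up" if matches else "stay dark")  # light up
```

The point of the sketch is how little the computer needs: two people become "similar" the moment a couple of coarse labels line up.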

Manipulating the Ads

My inspo here was taken entirely from Adam Ferriss. His work is just an amazing representation of the current world we live in. He takes everyday images and manipulates them to reflect our current societal standing. I think it’s so powerful and makes such a statement. That goes for his other work too, which I’ve linked below. His work is the kind where you could spend hours looking at it! For this route, I would screenshot all the ads I come across throughout a day or week and process them digitally to create an “Alice in Wonderland”-esque sense of overwhelming vastness, representing the sheer number of ads we are bombarded with every day. Personally, though, I don’t think this would have the impact I’m hoping for: in my mind the whole process is a bit busy and the visual communication isn’t clear enough.

Algorithms & Coding

So I don’t have many visual references for this, predominantly because they are ALL the same! I am learning coding and how to write algorithms alongside my fiancé, who is studying Computing and IT. From this point of view, it purely represents how a computer acknowledges us as humans in a really visual way. It’s confusing to the average person, a jumble of numbers and letters, but behind it all those numbers and letters sum up exactly who we are: what we Google late at night, the takeaways we prefer, the people we stalk on Instagram… It’s terrifying but beautiful. I see such an incredible dystopian beauty in coding and algorithms and I want to utilise this in some way. I think perhaps I need to make it look a little different to every other piece of “futuristic” coding design…

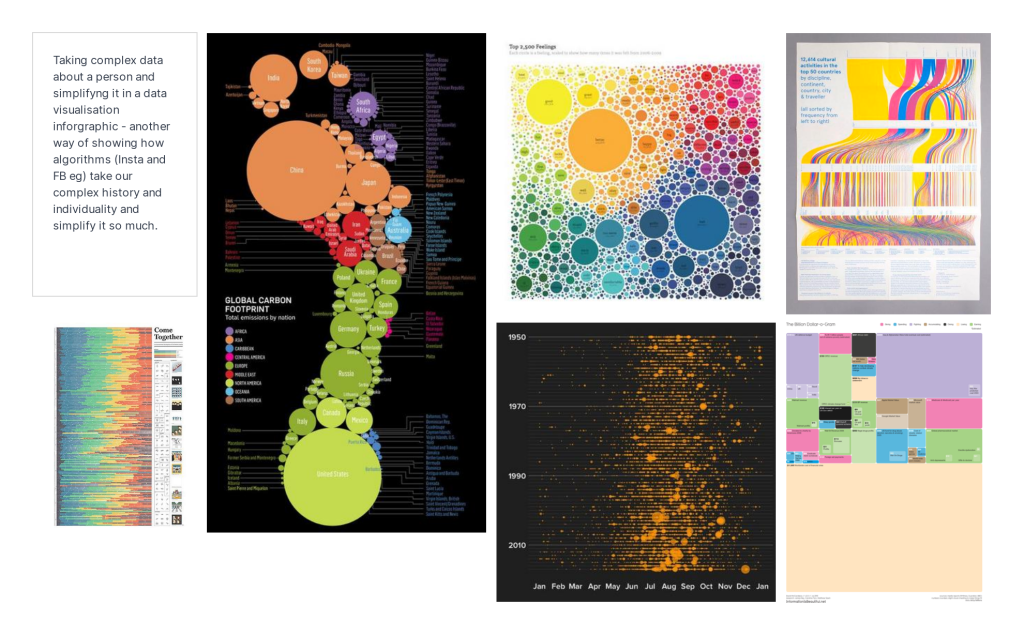

Data Visualisation

I loved finding the images for this moodboard. I think intense data collection and visualisation is incredible (I know, geeky)… But to take vast amounts of data and visualise it in a way that is clear, legible and understood is a huge feat. It’s something that I’ve wanted to try for a long time after researching David McCandless. He’s such a genius at taking the mundane and turning it into a readable set of data. It’s also amazing how other designers have taken something we all know (a Beatles song) and turned it into a visual set of symbols and numbers that are understood and even funny. I think it’d be a really great way of showing how we as humans interact with social media and how the computers see us. I do, however, think this would be way too big a task for my current time frame.

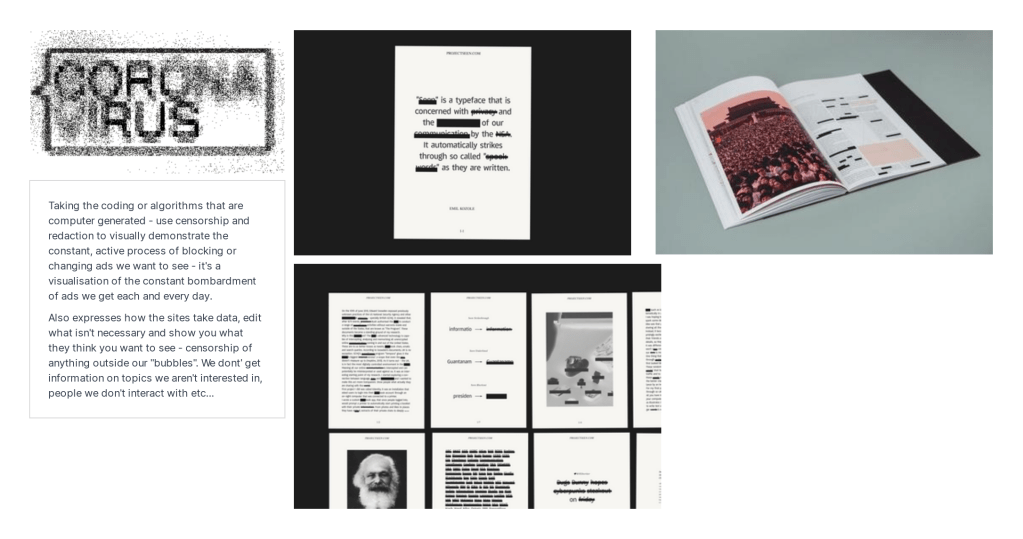

Censorship

Censored and redacted documents were something I touched on in my previous week’s blog, when looking into how we are “allowed” to see the world only as the computers let us. I wanted to find a way to weave this into my project this week because I think we are being censored on a daily basis in what we can and can’t see or interact with. Instagram’s algorithms edit the content we get to see, Google shows us the webpages that fit with our existing digital footprint, Facebook only shows the posts of those we interact with. They see it as “necessary filtering” so we only see what we want to see. But this creates a very dangerous, limited and blinkered social existence. In a world where we debate less face to face, they are “protecting” us from seeing something that we don’t agree with. AND this is based on what we’ve looked at before and liked or interacted with. So we can never expand our social media bubble and look further than what they allow us to see. Combining the idea of censorship/redaction with the algorithms is definitely the way I want to proceed.
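To make the "we only see what we've already liked" loop a bit more concrete, here is a toy Python sketch. It is only an assumption about the general shape of such filtering, not how Instagram, Google or Facebook actually rank content: the `filtered_feed` function, the topics and the `keep_top` cut-off are all invented for illustration.

```python
from collections import Counter


def filtered_feed(posts: list[dict], history: list[str], keep_top: int = 2) -> list[dict]:
    """Keep only posts whose topic is among the viewer's most-engaged topics."""
    top_topics = {topic for topic, _ in Counter(history).most_common(keep_top)}
    return [post for post in posts if post["topic"] in top_topics]


# Hypothetical record of what someone has liked or lingered on before.
history = ["beauty", "beauty", "fashion", "beauty", "fashion", "politics"]

# Hypothetical posts available today.
posts = [
    {"id": 1, "topic": "beauty"},
    {"id": 2, "topic": "politics"},
    {"id": 3, "topic": "fashion"},
    {"id": 4, "topic": "science"},
    {"id": 5, "topic": "beauty"},
]

feed = filtered_feed(posts, history)
print([post["id"] for post in feed])               # [1, 3, 5]
print(f"{len(feed)} of {len(posts)} posts shown")  # 3 of 5 posts shown
```

In this toy version the science post never appears because it was never in the history, and the politics post is dropped because it only came up once. That is the bubble: what gets through is decided by what got through before.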

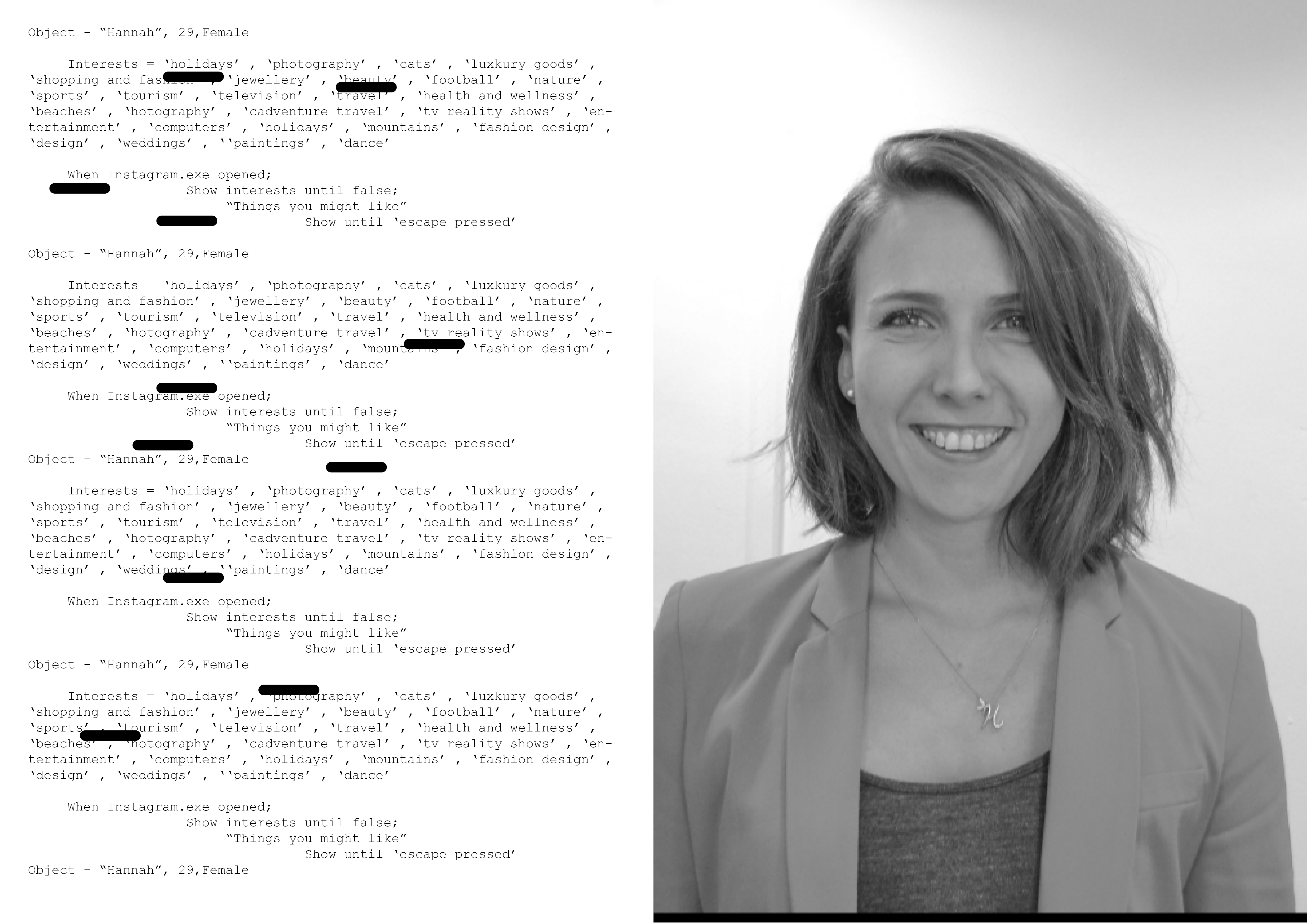

5 initial rough visuals* (rough being the operative word)

I’ll go into more detail below about my final outcome and expectations, but I will be using portraits taken of my family and friends and combining them with a “usable” code built from the “ad interests” list that Instagram’s own algorithm has generated for each of them. I just love how Instagram (taken as the main social media site in this instance) generates a list of my personal “likes” and favourite things, and this is how the site sees me. I’m just a list of words the site can use to target adverts in my direction and hook me in.

My Final Direction & Official Question (finally)

In all, there is an inherent bias to targeted ads. I’ve witnessed this first hand as a white, 29-year-old female: despite constantly blocking or removing certain beauty adverts or clothing ads that I don’t want to see, I still get them, because I fit into that “category”. As in Mimi’s work, we are just classified on a daily basis and this is the foundation of the content we’re shown. The piece will be made up of three parts for each portrait: *Algorithms*, *Censorship* and *Categorisation*. Each person will provide me with their Instagram-generated list (with their approval of course, GDPR and all) and I will represent this data as a “usable” written code. The daily censorship we are facing will be represented by redacted parts of the coding. All this will be combined with the portrait: we are individuals but are reduced to just a list of code at the end of the day. We can push back against this and say we’re obviously so much more and we experience so much more day to day, but do we?? We’re constantly on our phones, surrounded by computers, consuming the information fed to us by the media, all curated specifically for us and our interests.
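One possible way to turn an ad-interest list into code-looking text with redactions is sketched below in Python. Everything here is an assumption made for illustration: the interests are invented, `Profile` and `target` are made-up names that only exist as printed text, and redacting every third line is an arbitrary rule rather than a decision about the final piece.

```python
REDACTION_CHAR = "█"


def redact(text: str) -> str:
    """Black out every character except spaces, keeping the original length."""
    return "".join(" " if char == " " else REDACTION_CHAR for char in text)


def interests_to_code(sitter: str, interests: list[str], redact_every: int = 3) -> str:
    """Render a list of ad interests as code-like lines, redacting every nth one."""
    lines = [f'profile = Profile("{sitter}")']
    for index, interest in enumerate(interests, start=1):
        label = redact(interest) if index % redact_every == 0 else interest
        lines.append(f'profile.target("{label}")')
    return "\n".join(lines)


# Hypothetical ad interests for one portrait sitter.
sample_interests = ["skincare", "houseplants", "takeaways", "typography"]

print(interests_to_code("sitter_01", sample_interests))
# profile = Profile("sitter_01")
# profile.target("skincare")
# profile.target("houseplants")
# profile.target("█████████")
# profile.target("typography")
```

Keeping the redaction the same length as the hidden word means the viewer can still see that something is there and roughly how big it is, which feels close to how a redacted document reads.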

“How can Graphic Design raise awareness of our online identities and the changing nature of social media censorship?”

Reflection

There was a lot of self-reflection and criticality involved in this week’s work and I did struggle with this. I’ve also recognised parts of my design process, and the paths I take, that I need to work on and catch earlier in future, before I take a project too far. I’m keen to see how this develops over time from a personal point of view. I’m also looking forward to the next two weeks of this project.

Bibliography

MIMI ỌNỤỌHA. (n.d.). Classification.01 — MIMI ỌNỤỌHA. [online] Available at: http://mimionuoha.com/classification01 [Accessed 28 Jan. 2021].

Fong, E. (2018). Unmasking algorithmic bias with interactive art. [online] Medium. Available at: https://blog.codingitforward.com/unmasking-algorithmic-bias-with-interactive-art-97a787265a38 [Accessed 28 Jan. 2021].

Dribbble. (n.d.). Alyssa Foote. [online] Available at: https://dribbble.com/alysssssssssa [Accessed 28 Jan. 2021].

alyssafoote.com. (n.d.). WIRED — Alyssa Foote. [online] Available at: https://alyssafoote.com/WIRED-4 [Accessed 28 Jan. 2021].

WIRED Staff (2019). The Real Reason Tech Struggles With Algorithmic Bias. [online] WIRED. Available at: https://www.wired.com/story/the-real-reason-tech-struggles-with-algorithmic-bias/.

the Guardian. (2020). Student who wrote story about biased algorithm has results downgraded. [online] Available at: https://www.theguardian.com/education/2020/aug/18/ashton-a-level-student-predicted-results-fiasco-in-prize-winning-story-jessica-johnson-ashton [Accessed 28 Jan. 2021].

Pilkington, E. (2019). Digital dystopia: how algorithms punish the poor. [online] the Guardian. Available at: https://www.theguardian.com/technology/2019/oct/14/automating-poverty-algorithms-punish-poor.

Awario Blog. (n.d.). Social media algorithms on every platform: explained (with marketing applications). [online] Available at: https://awario.com/blog/social-media-algorithms-explained/ [Accessed 28 Jan. 2021].

Wired. (n.d.). Why Social Media Companies Frown on “Gaming the Algorithm.” [online] Available at: https://www.wired.com/story/platforms-gaming-algorithm/ [Accessed 28 Jan. 2021].

Eskandari, A. (2020). Social media: The manipulation algorithm. [online] Medium. Available at: https://medium.com/@alieskandari21/social-media-the-manipulation-algorithm-46d00f89c191 [Accessed 28 Jan. 2021].

Hern, A. (2017). Facebook and Twitter are being used to manipulate public opinion – report. [online] the Guardian. Available at: https://www.theguardian.com/technology/2017/jun/19/social-media-proganda-manipulating-public-opinion-bots-accounts-facebook-twitter.

After Digital. (n.d.). Algorithms, bubbles and social manipulation. [online] Available at: https://afterdigital.co.uk/blog/algorithms-bubbles-and-social-manipulation/ [Accessed 28 Jan. 2021].

It’s Nice That. (n.d.). A typeface that censors itself by CSM grad Emil Kozole. [online] Available at: https://www.itsnicethat.com/articles/emil-kozole-seen [Accessed 28 Jan. 2021].

It’s Nice That. (n.d.). From writing shader code to creating a jellyfish dataset, Adam Ferriss discusses his experimental digital practice. [online] Available at: https://www.itsnicethat.com/articles/adam-ferriss-shader-code-jellyfish-dataset-digital-170220 [Accessed 28 Jan. 2021].

Information is Beautiful. (n.d.). The Billion Dollar-o-Gram. [online] Available at: https://informationisbeautiful.net/visualizations/the-billion-dollar-o-gram-2009/ [Accessed 28 Jan. 2021].

amf.fyi. (n.d.). Social Media Got you Down? Be More Like Beyonce — Adam Ferriss. [online] Available at: https://amf.fyi/Social-Media-Got-you-Down-Be-More-Like-Beyonce.